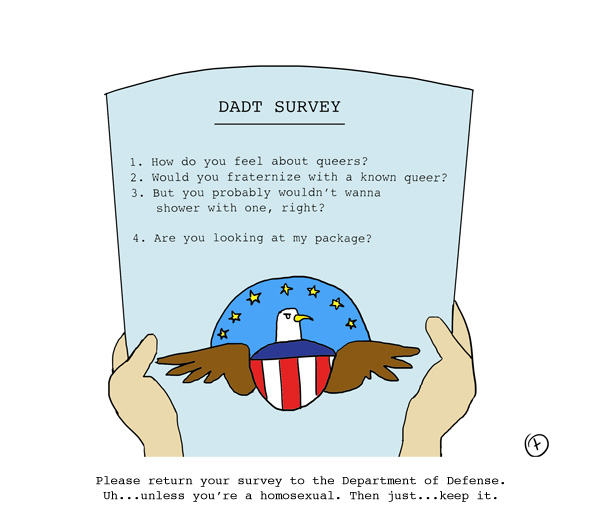

There’s already been a ton of controversy around the Don’t Ask Don’t Tell survey. You think you know how bad it is?

You have no idea.

taylormade doodle made by taylor

Pending continued interest/a non-dead horse, we’ll be breaking this shit down for you next week using our in-house Statistical Expert (by default, Intern Hot Laura, ’cause uh, she’s a Soc Major and has some textbooks handy) and Our In-House Investigative Journalist (by default, recent Journalism Graduate Sarah because she’s not afraid of the telephone) …. but we really can’t hold out that long to at least begin digging into the worst Survey we’ve ever seen.

Yes, our personal background in Survey and Research Methodology is relatively shoddy. My U-Mich Statistics 350 course convened at 10 AM and my teacher’s voice grated my soul, therefore I made the executive decision to never attend class and take it pass/fail (thus securing my stellar GPA). Meanwhile in Missouri, Sarah’s teacher stopped holding her Statistics Class after three weeks because he declared himself unfit to teach it. Everyone got an “A.” Oh, the midwest.

However. Had we kept up with our coursework and perhaps been asked to submit a survey of our own using the principles learned in Sociology and Statistics, we have a 95% confidence interval that we would’ve come up with something WAY BETTER THAN THIS.

Furthermore, we’ve got a shit-ton of probability density that this DADT survey would’ve earned the U.S. Government an F. Alexander Nicholson, executive director of Servicemembers United, agrees:

“The survey isn’t even just slightly bad. It’s far more skewed than we even expected it to be, given the working group’s commitment to staying neutral.”

The Pentagon has responded to the accusations of bias by saying that those things are redic, because I mean c’mon, they hired an outside firm. But um… far be it from us to ever defend a giant corporation with massive influence over government policy but the outside firm they hired, Westat, appears more or less impenetrable. Founded in 1963, the Maryland-based employee-owned company has conducted thousands of surveys for the government and other bodies with relatively stalwart methodology. Its only lobbying dollars have gone towards, predictably, funding for a survey on Children’s Health, which doesn’t scream SKETCHY to anyone.

In fact, Westat has historically been one step ahead of the government, like in 1999, when the White House Office of National Drug Control Policy solicited a $42.7 million study about anti-drug campaigns and education from Westat. The results of the study, which ran from November 1999 to June 2004, were that anti-drug campaigns weren’t working. As explained in Slate:

Five years and $43 million to show that a billion-dollar ad campaign doesn’t work? That’s bad. But perhaps worse, and as yet unreported, NIDA and the White House drug office sat on the Westat report for a year and a half beginning in early 2005—while spending $220 million on the anti-marijuana ads in fiscal years 2005 and 2006.

If Westat is secretly populated by biased conservatives, they’ve gone remarkably unchecked for quite some time.

Regardless, someone f*cked up big time, and here’s one example of exactly how they did that.

DADT Survey: All About Leading Questions & Response Bias

EXHIBIT A:

As this handy online crash-course in AP Stats explains, this is “Response Bias” because it is a “Leading Question.”

Leading questions. The wording of the question may be loaded in some way to unduly favor one response over another. For example, a satisfaction survey may ask the respondent to indicate where she is satisfied, dissatisfied, or very dissatisfied. By giving the respondent one response option to express satisfaction and two response options to express dissatisfaction, this survey question is biased toward getting a dissatisfied response.

By giving the respondent four options for dissatisfaction/discomfort, one option for neutrality, zero options for comfort, one option for who-knows-what and one for “whatever,” this survey question is biased toward getting a dissatisfied response.
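If you want to see that lopsidedness as a number, here is a minimal Python sketch. The valence labels are our own reading of the answer choices, the `option_balance` helper is hypothetical, and the "skew" score is something we made up for illustration, not a standard survey-methodology statistic.

```python
from collections import Counter

# Our own (assumed) valence labels for Exhibit A's answer choices, matching the
# tally above: four discomfort options, one neutral, zero comfort options,
# one "something else" and one "whatever".
EXHIBIT_A = ["negative"] * 4 + ["neutral", "other", "apathy"]


def option_balance(options):
    """Return (positive_count, negative_count, skew) for a list of valence labels.

    skew = (positive - negative) / (positive + negative), so -1.0 means every
    substantive option is negative, 0.0 means balanced, +1.0 means all positive.
    skew is None when there are no positive or negative options at all.
    """
    counts = Counter(options)
    pos, neg = counts["positive"], counts["negative"]
    total = pos + neg
    skew = (pos - neg) / total if total else None
    return pos, neg, skew


print(option_balance(EXHIBIT_A))  # (0, 4, -1.0)
```

A score of -1.0 is as lopsided as this made-up scale goes. The AP Stats example (one satisfied option, two dissatisfied) would come out at about -0.33, which is already bad enough to make it into a crash-course.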

EXHIBIT B:

Language, people, language! This question tells the survey-taker how to feel about living near/with service-members by labeling it a “situation” (defined as a “condition, case or plight”) which needs to be “handled.” “Living with a homo” is officially qualified before the question is even asked — a question that, perhaps, HAS NOTHING TO DO WITH ANYTHING EVER.

And again, we have three options for dissatisfaction/discomfort, one for neutrality, one option for who-knows-what and one for “whatever.” So this question is SUPER biased toward getting a dissatisfied response.

EXHIBIT C:

This one’s especially special for the giant gulf of responses between “varying levels of discomfort” and “soooooooo comfortable that I actually want to be friends.” Let’s not even touch the phrase “like any other neighbors” which is a subjective qualification (e.g., I hate other people and therefore ignore my neighbors, so for ME that answer would be a negative response) and just note that there’s no neutral answer here. Oh also, from that AP Stats crash-course again, about response bias?

Social desirability. Most people like to present themselves in a favorable light, so they will be reluctant to admit to unsavory attitudes or illegal activities in a survey, particularly if survey results are not confidential. Instead, their responses may be biased toward what they believe is socially desirable.

In order to balance out the responses, we have re-written the question. Please note that responses are listed in order from very comfortable to very uncomfortable, ending with the apathy and the mysterious “something else” (which, at least in my case, would be “inquire about possibilities for an erotic third,” but for other soldiers might be “continue sexually harassing my female co-servicemembers, just like always”), just like we would’ve learned to do if we’d gone to class.

AUTOSTRADDLE REWRITE:

Good Luck!

This is amazing and needs to become an internet sensation ASAP, especially the re-writes. Like that Double Rainbow video but more dignified.

And who needs statistics class when you have Autostraddle?

Thanks so much for this! Love the final question re-write!

I would love to take a full version of the survey…. in rewrites. Just for fun.

Phenomenal rewrite! :)

Tasha loves this!!!! I do too. Soooooo glad that survey leaked, right? It’s just GROSS all the INJUSTICE. Such hypocrites.

Awesome rewrite! I think that we should replace our current government with an Autostraddle-ocracy. The country would be a better place and we’d have people from all over the world wanting to become citizens! Party in the Rainbow House!

oh my cod. the rewrite! i can’t wait to get my hands on this baby.

i’m not gonna lie, i kinda stopped reading this to *swoon* when you said Intern Hot Laura is a Soc Major.

this statistics talk makes me freak out because i am trying to write this research paper for publication and remember things about statistics and i probably can’t and won’t

cue night sweats

“By giving the respondent four options for dissatisfaction/discomfort, one option for neutrality, zero options for comfort, one option for who-knows-what and one for “whatever,” this survey question is biased toward getting a dissatisfied response.”

Yes.

I can’t even believe this survey exists at all. I refuse to.

I was kinda pissed off until the last bit, when I started laughing my head off and my brother was like, “WTF, KZ?”

I just read the whole survey and while I was a soc major and have been paid to write surveys and formulate questions for focus groups and interviews at points in my life…probably everything I want to say about this has already been said as it’s a well done “What Not To Do” guide and I’m still busy fuming over it.

My only contribution is to suggest that people take to the phone lines and bombard the Westat telephone number they’ve thoughtfully put on the survey in case you need help or have questions about it: 888-491-2083.

From what I saw, the re-write gave more realistic options. And it was statistically less biased. I vote AutoStraddle is the government’s third party survey writer from now on.

I second that!

Wait, can I vote if I’m not from the States?

they should send this survey to my roommate and wait for what she has to tell them.

now, she is so straight, if she’d ever kiss a woman she would have to pay for my new world view. now, how does she feel about sharing an apartment with an openly gay roommate? she runs around half-naked 90 percent of the time, we have no restrictions for the other one to enter the bathroom if the other one’s showering and I don’t think there is a pair of breasts I have seen more often in the last couple of months than hers.

and why’s that? right, because some people feel confident and are sure about their own sexuality and don’t have to blame gay people for always looking or making their lives generally uncomfortable.

she is super cool with me being a lesbian, still I don’t know how someone could possibly NOT think about an uncomfortable situation if asked these questions.

what purpose does this serve? finding out that a bunch of people would feel uncomfortable showering next to a bunch of other people? will there be separate bathrooms?

will people now ask everyone who enters some sort of public bathroom what their sexual orientation is?

Why is this not an internet sensation yet? Why are more people not freaking out?!

Stuff like this really scares me in the same way that Bush being reelected scared me. That is to say crying for the majority of 1st period in 9th grade. Just when you think the government MIGHT be making progress, it pulls stuff like this.

To quote Ted Leo, the man who got me through the 2nd term of GW BUSH,

“Won’t you take me where my feet feel happy in their own time

And the Cathedral of Reason lets the bells chime, and the lighting is fine?

I’m old enough to know that people waiting on some big sign

Should quit their waiting on the Divine. Divine is what’s in your mind

I’ve seen the cruel and hard, and I’ve seen them hard on you

But I’ll buy you brand new shoes if you cross to my side-

there’s a whole lot of walking to do”

We just have to keep walking!

You know what’s the most wrong with these questions? The word “if.”

One day, they will realize equality and progress is an inevitability, as long as we keep fighting for it.

So I repeat, it should be, “When DADT is repealed…”

Don’t know. I have no legs.

Guys, I scared my cat. I was laughing that hard. Oh lord.

But 4 realz. Riot time, yes?